The big data era has created a need to analyze and structure an ever-increasing amount of data. In response to this data deluge, new models for processing and storing data have emerged, among them the data lakehouse.

Amazon Web Services (AWS) is a pioneer and a leading cloud provider in the fast-changing field of digital data management. With its extensive range of solutions and services, AWS has changed how businesses handle, analyze, and gain insights from their information. As organizations struggle with the growing volume, variety, and velocity of data, AWS has established itself as a reliable partner that helps businesses use their data to make well-informed decisions.

Data Architecture Evolution: The Emergence of Data Lakehouses

From conventional data warehouses to the rise of data lakes and ultimately to data lakehouses, data architecture has undergone a significant transformation. Each stage of this evolution addresses specific requirements and problems raised by contemporary data management needs.

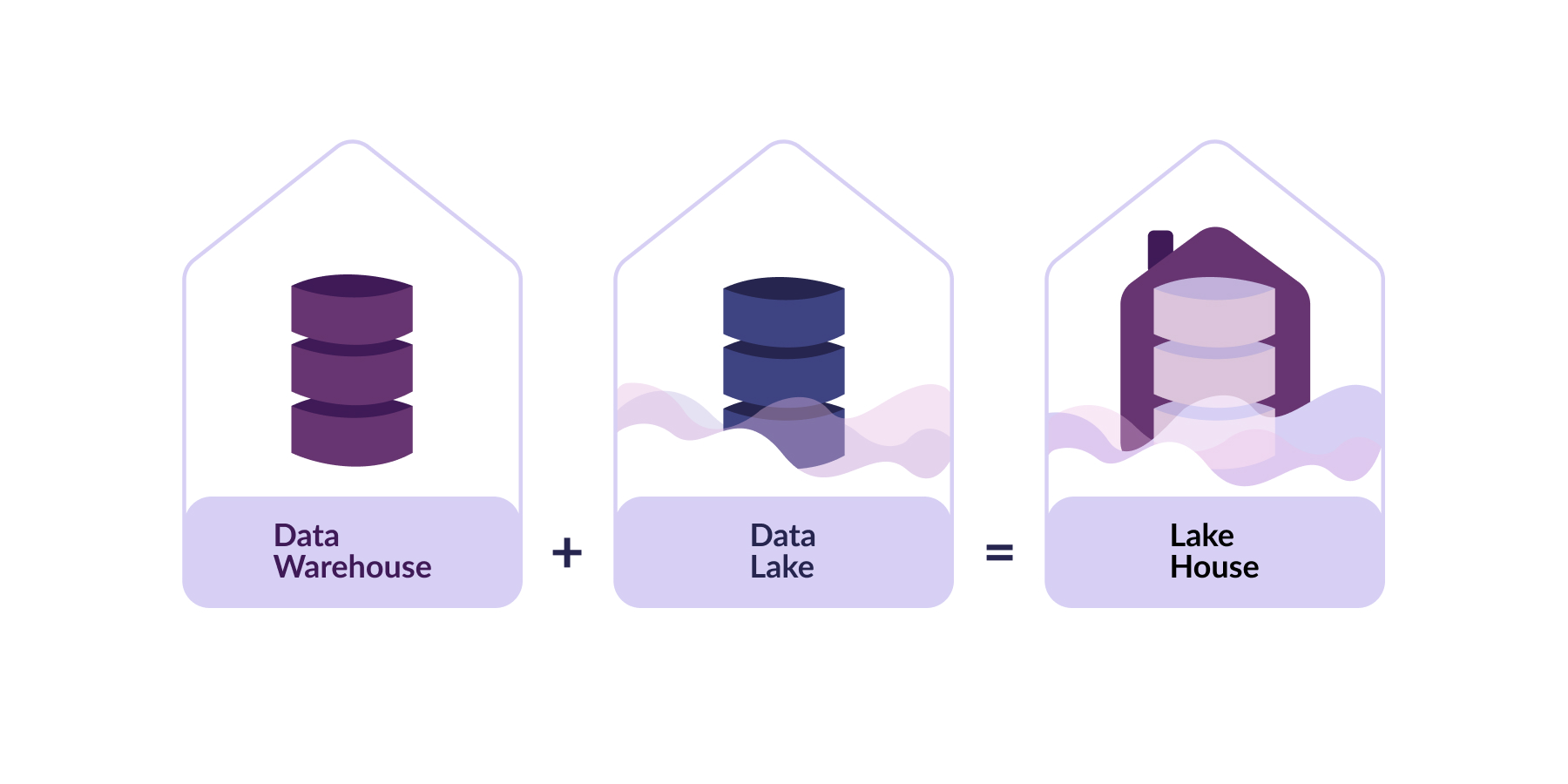

Conventional Data Warehouses

Traditional data warehouses were originally designed to manage well-structured data. They work well for reporting and for running queries on specific data sets. However, given the volume of data that modern companies generate, traditional data warehouses are often not flexible enough to handle it. Data lakes were developed as a result.

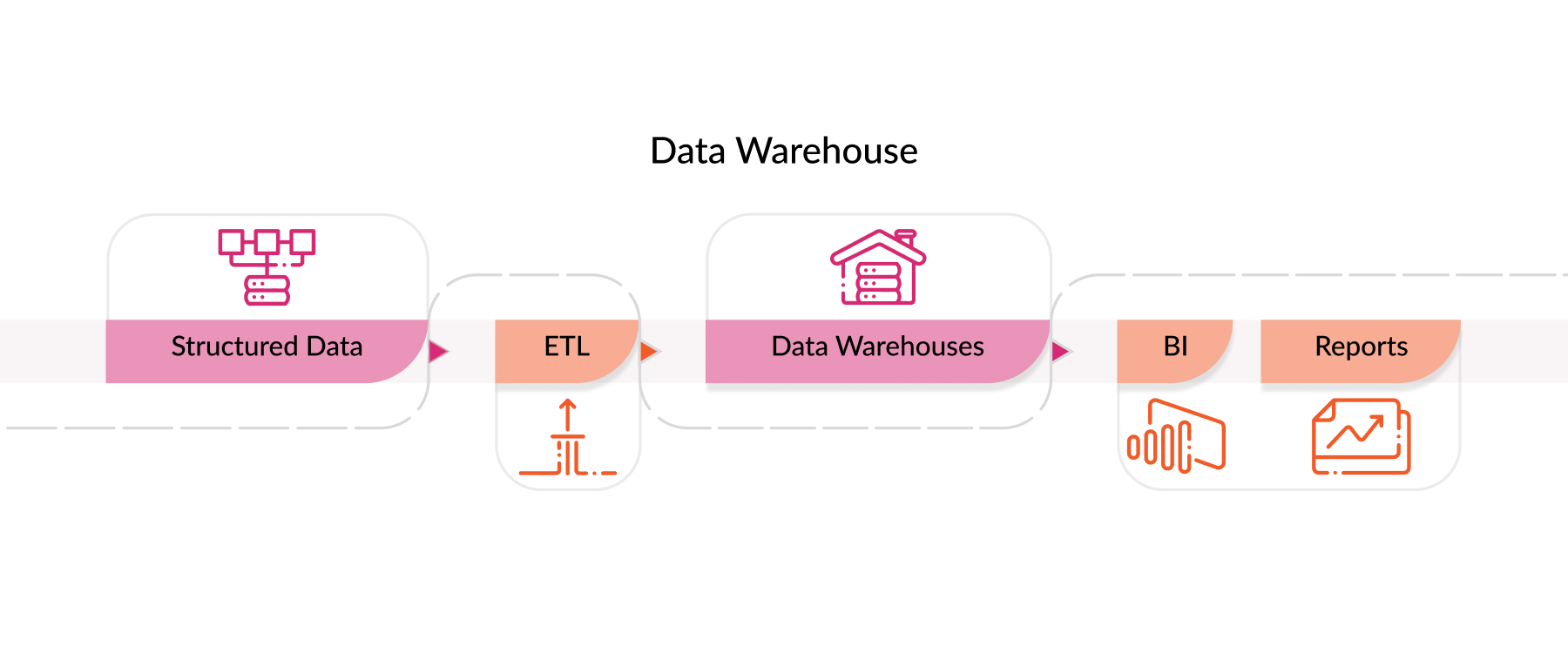

Data Lakes

Data lakes let us work with many types of data. They store unstructured, semi-structured, and structured information. Unlike traditional warehouses, data lakes can hold data from a wide range of sources without requiring a specific data format. This adaptability simplifies large-scale data exploration, transformation, and analysis.
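As a small illustration of storing data without a fixed format, the sketch below keeps raw records from two hypothetical sources (a CSV export and a JSON event feed) exactly as they arrived and applies a structure only when the data is read. The object names, fields, and the `read_orders` helper are assumptions made up for this example and are not part of any AWS API.

```python
import csv
import io
import json

# Raw payloads stored as-is, the way a data lake would keep them.
# Both payloads and their field names are invented for illustration.
RAW_OBJECTS = {
    "crm/orders.csv": "order_id,amount\n1,19.99\n2,5.00\n",
    "web/events.json": '[{"order_id": 3, "amount": "12.50", "channel": "web"}]',
}


def read_orders(raw_objects):
    """Apply a common structure at read time (schema-on-read)."""
    rows = []
    for key, payload in raw_objects.items():
        if key.endswith(".csv"):
            records = csv.DictReader(io.StringIO(payload))
        elif key.endswith(".json"):
            records = json.loads(payload)
        else:
            continue  # unknown formats simply stay in the lake untouched
        for record in records:
            rows.append({
                "order_id": int(record["order_id"]),
                "amount": float(record["amount"]),
                "source": key.split("/")[0],
            })
    return rows


orders = read_orders(RAW_OBJECTS)
```

The raw payloads are never rewritten; only the reader decides which fields matter, which is why extra fields such as `channel` can sit in the lake without breaking anything.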

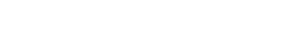

Data Lakehouses

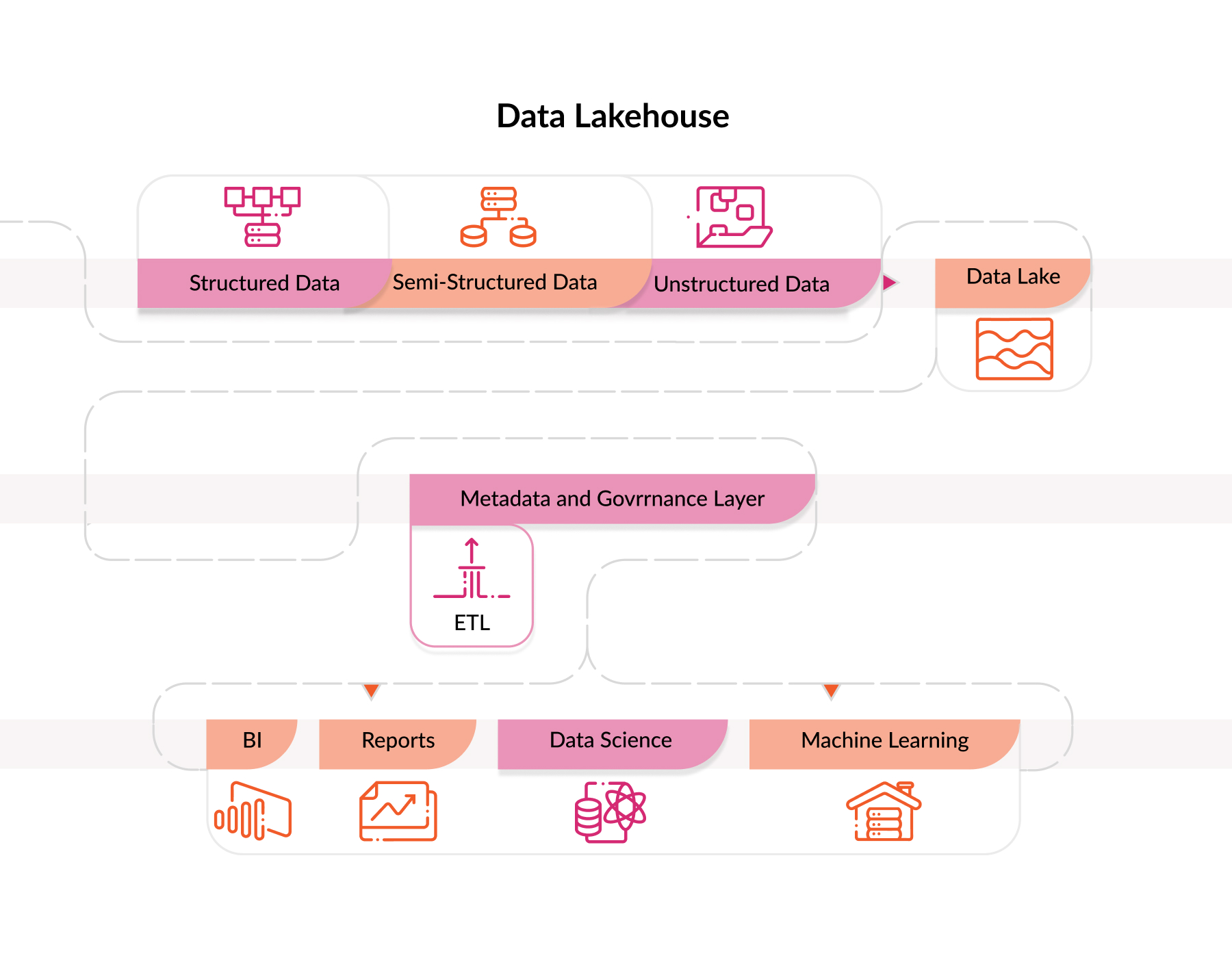

A data lakehouse is a single place where both raw and processed data can be stored and analyzed. It works well for both historical analysis and real-time analytics. This architecture combines the versatility and scalability of data lakes with the speed and structured querying of data warehouses. With a simplified data structure and no need to move data between systems, we can manage vast amounts of data more efficiently.

AWS Data Lakehouse with AWS Services

To build such an architecture on AWS, we must make use of a variety of AWS services. With this architecture, we can develop a solution that covers extensive data processing, storage, analysis, and governance.

Amazon S3 – the primary data repository.

Amazon Simple Storage Service (Amazon S3) is an excellent choice for storing all of your data centrally. It can handle large volumes of information and is simple to use. Amazon S3 is frequently the primary storage layer when setting up a data lakehouse. Your data stays in its original format until it is analyzed.
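One common way to keep raw files organized in a single bucket is a date-partitioned key layout. The sketch below shows one possible convention; the `raw/` prefix, the `year=/month=/day=` folders, and both helper functions are assumptions for illustration, not a layout that AWS prescribes. The upload helper relies on boto3's `upload_file` call and only works with boto3 installed and AWS credentials configured.

```python
from datetime import date


def build_raw_key(source: str, dataset: str, day: date, filename: str) -> str:
    """Build an object key for the raw zone, partitioned by ingestion date."""
    return (
        f"raw/{source}/{dataset}/"
        f"year={day.year}/month={day.month:02d}/day={day.day:02d}/{filename}"
    )


def upload_raw_file(local_path: str, bucket: str, key: str) -> None:
    """Upload a file unchanged. Needs boto3 and AWS credentials to run."""
    import boto3  # imported lazily so the key helper works without it

    boto3.client("s3").upload_file(local_path, bucket, key)


# Hypothetical source system, dataset, and file name.
key = build_raw_key("crm", "orders", date(2024, 3, 7), "orders.csv")
```

Because the file is uploaded byte for byte, the original format is preserved, and the date folders make it easy for later processing steps to pick up only new data.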

AWS Glue – ETL (extract, transform, load).

AWS Glue is a valuable tool for preparing data for analysis. It can gather information from many sources, transform it to match your requirements, and then organize it for analysis. Additionally, Glue keeps track of the data stored in Amazon S3 through its Data Catalog.
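To make the three ETL stages concrete, here is a minimal plain-Python sketch of the pattern. It is not a Glue job script and does not use the Glue API; the sample data, the cleaning rules, and the function names are all assumptions chosen for illustration.

```python
import csv
import io
import json

# Invented sample input with untidy headers, a missing name, and mixed-case codes.
RAW_CSV = (
    "customer, signup_date ,country\n"
    "Ada,2024-01-05,gb\n"
    ",2024-01-06,us\n"
    "Lin,2024-02-11,SG\n"
)


def extract(raw_text):
    """Extract: parse raw CSV text into dictionaries with trimmed keys and values."""
    reader = csv.DictReader(io.StringIO(raw_text))
    return [{k.strip(): v.strip() for k, v in row.items()} for row in reader]


def transform(rows):
    """Transform: drop rows without a customer and upper-case country codes."""
    return [
        {**row, "country": row["country"].upper()}
        for row in rows
        if row["customer"]
    ]


def load(rows):
    """Load: serialize the cleaned rows as JSON Lines text."""
    return "\n".join(json.dumps(row, sort_keys=True) for row in rows)


curated = load(transform(extract(RAW_CSV)))
```

In a real pipeline the extract step would read from the raw storage area and the load step would write to a curated one; here both are in-memory strings so the example stays self-contained.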

Amazon Redshift – high-performance structured data analytics.

Amazon Redshift enables fast analysis of large data sets. It fits well into a data lakehouse setup, where it can efficiently organize and query structured data from the data lake in Amazon S3. With its many performance-enhancing features and support for SQL-based queries, Redshift is a strong option for complex analytics.
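The kind of SQL-based analysis described here can be sketched with a small aggregate query. The example below runs against an in-memory SQLite database purely as a local stand-in, so it says nothing about Redshift's performance or its SQL dialect details; the `orders` table and its rows are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # local stand-in, not Redshift
conn.execute("CREATE TABLE orders (region TEXT, order_date TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("EU", "2024-01-10", 120.0),
        ("EU", "2024-01-22", 80.0),
        ("US", "2024-01-15", 250.0),
        ("US", "2024-02-03", 50.0),  # outside the January window below
    ],
)

# Revenue and order count per region for January 2024.
QUERY = """
SELECT region, COUNT(*) AS order_count, SUM(amount) AS revenue
FROM orders
WHERE order_date >= '2024-01-01' AND order_date < '2024-02-01'
GROUP BY region
ORDER BY revenue DESC
"""

january_revenue = conn.execute(QUERY).fetchall()
```

The query itself is ordinary SQL, which is the point: analysts can express this kind of question without writing processing code.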

Amazon QuickSight – user-friendly data visualization.

Amazon QuickSight helps businesses understand and present their business data. It connects to various data sources, such as Amazon S3 and Amazon Redshift, to generate interactive dashboards and reports. QuickSight is intuitive and easy to use, even for people with little programming experience. In short, it turns insights into visuals.

AWS Lake Formation – building data lakehouses.

AWS Lake Formation is designed to build and manage data lakehouses. It reduces the difficulty of building and maintaining such an architecture by offering automated solutions for data ingestion, classification, access control, and other tasks. With AWS Lake Formation, businesses can quickly create a secure, scalable environment for managing many data sources and performing advanced analytics.
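Access control is the easiest of these tasks to sketch in code. The example below builds a read-only grant for one table in the shape that boto3's Lake Formation `grant_permissions` call is understood to accept; verify the field names against the current boto3 documentation before relying on them. The role ARN, database name, table name, and both helper functions are placeholders invented for this illustration.

```python
def build_select_grant(principal_arn: str, database: str, table: str) -> dict:
    """Describe a read-only (SELECT) grant on a single catalog table."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {"Table": {"DatabaseName": database, "Name": table}},
        "Permissions": ["SELECT"],
    }


def apply_grant(request: dict) -> None:
    """Send the grant. Needs boto3, AWS credentials, and suitable admin rights."""
    import boto3  # imported lazily so the builder works without it

    boto3.client("lakeformation").grant_permissions(**request)


grant = build_select_grant(
    "arn:aws:iam::123456789012:role/AnalystRole",  # placeholder ARN
    "sales_db",  # hypothetical database
    "orders",  # hypothetical table
)
```

Keeping the request as plain data makes it easy to review or version-control permissions before anything is applied to a live account.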

Wrapping Up

Any company engaged in data management and analytics can benefit from AWS's extensive functionality by assembling a data lakehouse architecture tailored to its needs. A combination of Amazon S3, AWS Glue, Amazon Redshift, and AWS Lake Formation supports complex analytics and creates efficient environments that can handle a variety of data types.

This extensive set of AWS tools guarantees flexibility in data management while equipping enterprises with the resources needed for effective data-driven decision-making.

Do you consider a data lakehouse the optimal architecture for your business? Then our AWS cloud services are exactly what you need. Feel free to request a consultation.